Artificial Intelligence (AI) has emerged from the labs of academia and the R&D departments of tech giants to become a transformative force in nearly every aspect of our lives. It powers the algorithms that recommend what we buy and watch, diagnoses diseases with remarkable accuracy, and informs critical decisions in fields as diverse as finance, law enforcement, and human resources. As AI’s power and autonomy grow, however, so too does a profound ethical vacuum. The rapid pace of technological development has outpaced the legal and moral frameworks needed to govern it, creating a new set of risks that threaten to undermine trust, perpetuate injustice, and erode fundamental human rights.

In response to this urgent ethical imperative, governments and international bodies are now racing to establish a new legal framework for the responsible development of AI. This is a high-stakes legal battlefront that will determine the future of a technology with the power to either uplift humanity or become a source of new harms. The central conflict is a delicate balancing act: how to foster innovation without compromising on core principles of fairness, accountability, and transparency. This article will provide a comprehensive guide to the ethical challenges that are driving the push for legislation, the key principles of emerging legal frameworks, the diverse global approaches to regulation, and the profound implications for businesses and society.

Why AI Needs a Moral Compass

The call for ethical AI legislation is not an abstract academic exercise; it is a direct response to a growing list of real-world harms. These ethical dilemmas arise from the very nature of AI itself.

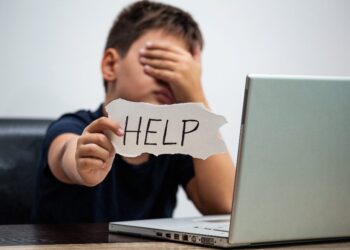

- Algorithmic Bias and Discrimination: AI systems are trained on vast datasets of human behavior. If that data reflects existing societal biases (in hiring, lending, or criminal justice, for example), the AI can learn those biases and, once deployed at scale, amplify them, leading to discriminatory outcomes. For example, an AI tool used for resume screening may inadvertently favor applicants from a specific demographic, perpetuating inequality in the workforce.

- The “Black Box” Problem: Many of the most powerful AI models, particularly deep learning systems, operate as “black boxes” whose decision-making process is inscrutable, even to their creators. When a loan is denied or a medical diagnosis is made by an AI, the lack of a clear, explainable reason for the decision makes it very difficult for a human to audit the system, challenge its judgment, or even understand how a mistake was made.

- Privacy and Data Governance: AI systems require immense amounts of data to function. The collection and use of this data, if not properly governed, can lead to massive privacy violations and the misuse of personal information. Without a clear legal framework, there is a risk that AI systems could be used for mass surveillance or to create a detailed, real-time profile of every individual.

- Accountability and Liability: When an autonomous vehicle causes an accident or a medical AI provides a faulty diagnosis that leads to harm, who is responsible? The developer? The company that deployed the AI? The lack of a clear legal framework for accountability in these situations poses a serious legal risk and a moral dilemma.

- The Existential Threat: On the most extreme end, some ethicists and technologists warn of the existential risks of uncontrolled AI, including the potential for autonomous weapons systems that can make life-or-death decisions without human intervention.

Key Pillars of Ethical AI Legislation

In response to these challenges, a new legal framework is emerging, built on a set of core ethical principles that aim to guide the responsible development and deployment of AI.

A. Transparency and Explainability:

The “black box” problem is a central concern for regulators. New legislation is pushing for AI systems to be auditable and their decisions explainable to a human. This principle, often referred to as the “right to explanation,” gives individuals the right to request a clear, human-readable explanation for a decision made by an AI system that affects them. This would, for example, require a bank to explain why its AI system denied a loan, or a company to explain why an AI tool rejected a job applicant.
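As a rough illustration of what a human-readable explanation could look like, the sketch below derives "reason codes" from a simple linear scoring model by ranking the features that pulled an applicant's score down the most. Everything in it is an assumption made for illustration: the feature names, weights, baseline, threshold, and wording are hypothetical, and real credit models and legal requirements are considerably more involved.

```python
# Hypothetical linear scoring model. The weights, baseline, threshold, and
# reason wording are illustrative assumptions, not any real lender's system.
WEIGHTS = {"income_k": 0.4, "debt_ratio": -50.0, "late_payments": -8.0}
BASELINE = {"income_k": 60.0, "debt_ratio": 0.30, "late_payments": 0.0}
BASE_SCORE = 100.0
APPROVAL_THRESHOLD = 90.0

REASON_TEXT = {
    "income_k": "Annual income is below the reference level.",
    "debt_ratio": "Debt-to-income ratio is above the reference level.",
    "late_payments": "Recent late payments were reported.",
}


def contributions(applicant):
    """Points each feature adds or removes relative to the baseline applicant."""
    return {name: WEIGHTS[name] * (applicant[name] - BASELINE[name]) for name in WEIGHTS}


def decide(applicant, top_n=2):
    """Return the decision plus, for denials, the top reasons behind it."""
    parts = contributions(applicant)
    score = BASE_SCORE + sum(parts.values())
    approved = score >= APPROVAL_THRESHOLD
    reasons = []
    if not approved:
        # Sort ascending so the most negative contributions come first.
        worst = sorted((value, name) for name, value in parts.items() if value < 0)
        reasons = [REASON_TEXT[name] for _, name in worst[:top_n]]
    return {"approved": approved, "score": round(score, 1), "reasons": reasons}


if __name__ == "__main__":
    print(decide({"income_k": 40.0, "debt_ratio": 0.5, "late_payments": 3}))
```

This approach only works directly for models whose score decomposes into per-feature contributions; for opaque models, a separate attribution technique would be needed to produce comparable reasons.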

B. Algorithmic Bias and Fairness:

Legislation is also focusing on the need for AI systems to be fair and free from bias. This requires developers to implement a number of new measures.

- Fairness Audits: New laws could require companies to conduct regular “fairness audits” of their AI systems to test them for bias against a variety of demographic groups.

- Data Governance: The legal framework will need to mandate that AI developers use clean, diverse, and representative datasets for training. This would help to prevent a system from learning and amplifying existing societal biases.

- Impact Assessments: Legislation could require a mandatory “AI impact assessment” before an AI system is deployed, a process that would require a company to analyze the potential for its AI to cause harm or discrimination.
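One simple check a fairness audit might include is a comparison of selection rates across demographic groups. The sketch below computes each group's rate of favorable outcomes and the ratio between the lowest and highest rate. The 0.8 cutoff mirrors the "four-fifths" rule of thumb from U.S. employment-selection guidance and is used here only as an assumed default; the function names and sample data are hypothetical, and a single ratio is far from a complete audit.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, selected) pairs, where selected is True/False."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    if not rates:
        raise ValueError("no records to audit")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so rates are equal
    return min(rates.values()) / highest


def passes_ratio_check(rates, threshold=0.8):
    """True if the ratio meets the assumed threshold (0.8 by default)."""
    return disparate_impact_ratio(rates) >= threshold


if __name__ == "__main__":
    # Made-up outcomes: group A selected 6 of 10, group B selected 3 of 10.
    sample = [("A", i < 6) for i in range(10)] + [("B", i < 3) for i in range(10)]
    rates = selection_rates(sample)
    print(rates, disparate_impact_ratio(rates), passes_ratio_check(rates))
```

A ratio below the threshold does not prove discrimination, and a ratio above it does not rule it out; it is a screening signal that tells an auditor where to look more closely.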

C. Privacy and Data Governance:

The ethical development of AI is inextricably linked to the protection of privacy. New legislation is reinforcing the idea that strong data governance is a prerequisite for ethical AI.

- Informed Consent: A key legal requirement will be for companies to get clear, informed consent from individuals for the collection and use of their data for AI training. This would give individuals more control over how their data is used.

- Data Minimization: This legal principle, a cornerstone of privacy laws like GDPR, will be a key part of AI legislation. It requires companies to collect only the data that is necessary for a specific purpose, which would help to reduce the amount of personal data that is used for AI training.

- Data Security: New laws will also set a high bar for data security in AI systems, with significant fines for companies that fail to protect user data from hackers and breaches.
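Data minimization in particular lends itself to a concrete sketch: before a record is used for a given purpose, every field not declared as necessary for that purpose is dropped. The purposes, field names, and `minimize` helper below are hypothetical and chosen only for illustration; in practice the allow-list would come from a documented legal and business review.

```python
# Hypothetical allow-list mapping each declared purpose to the fields it needs.
ALLOWED_FIELDS = {
    "model_training": {"age_band", "region", "purchase_category"},
    "billing": {"name", "email", "card_token"},
}


def minimize(record, purpose):
    """Return a copy of record containing only fields allowed for the purpose."""
    if purpose not in ALLOWED_FIELDS:
        # Refuse to process data for a purpose that was never declared.
        raise ValueError(f"undeclared purpose: {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


if __name__ == "__main__":
    customer = {
        "name": "Example Person",
        "email": "person@example.com",
        "card_token": "tok_123",
        "age_band": "30-39",
        "region": "EU",
        "purchase_category": "books",
    }
    print(minimize(customer, "model_training"))
```

Using an allow-list rather than a block-list means that a newly added field is excluded by default until someone decides it is necessary for a purpose.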

D. Accountability and Liability:

The legal framework will need to provide a clear answer to the question of who is responsible when an AI system causes harm.

- Strict Liability: In some cases, such as with autonomous vehicles, the legal system may need to move toward a strict liability model, holding the manufacturer responsible for any harm caused by the product, regardless of negligence.

- Transparency in the Supply Chain: A new legal framework will also need to address the complexity of the AI supply chain. When a company uses an AI system that was developed by a third party, who is responsible for the system’s flaws? The legal framework will need to provide a clear answer to this question, ensuring that there is accountability for the entire AI value chain.

Global Regulatory Approaches

The global response to the ethical challenges of AI is a fragmented but evolving one, with different regions taking fundamentally different approaches to regulation.

- The European Union’s AI Act: The EU is a global leader in AI regulation. Its AI Act, adopted in 2024, is widely described as the world’s first comprehensive legal framework for AI. The EU’s approach is a risk-based model that categorizes AI systems into four tiers, and its goal is to establish a global standard for “trustworthy AI.”

  - Unacceptable Risk: AI systems that pose a clear threat to fundamental human rights are banned. This includes systems that manipulate human behavior, social scoring by governments, and real-time biometric identification in public spaces for law enforcement purposes (with limited exceptions).

  - High Risk: This includes AI used in critical sectors like healthcare, law enforcement, and employment. These systems are not banned, but they face strict requirements, including a rigorous conformity assessment, technical documentation, and human oversight.

  - Limited Risk: AI systems with limited risk, such as chatbots, are subject to minimal transparency obligations.

  - Minimal or No Risk: The vast majority of AI systems, such as spam filters, fall into this category and are subject to no specific legal obligations.

- The U.S. Approach: A Patchwork of Laws: The U.S. has adopted a more decentralized, sector-specific, and non-prescriptive approach. A number of state-level laws address AI-related issues, and executive orders guide federal agencies. The U.S. approach relies largely on existing laws, such as civil rights laws, to pursue companies that use AI in a discriminatory way.

- China’s AI Governance Strategy: China’s approach is characterized by a dual focus: aggressively promoting AI development while imposing strict, top-down governance to maintain social control. It has implemented laws that require algorithms to be transparent and non-discriminatory and has been one of the first countries to regulate generative AI, requiring providers to label synthetically generated content and ensuring that AI is not used to create content that violates social norms or national security.
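The EU's four-tier, risk-based model described above can be pictured as a lookup from use case to tier to obligations. The sketch below is a deliberate simplification: the use-case names and the mapping are assumptions drawn from the examples in this article, and classifying a real system would require legal analysis of the regulation itself.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical use-case labels, mapped using the examples given in this article.
USE_CASE_TIERS = {
    "government_social_scoring": RiskTier.UNACCEPTABLE,
    "medical_diagnosis": RiskTier.HIGH,
    "resume_screening": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}

# Simplified obligations per tier, paraphrasing the tier descriptions above.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited"],
    RiskTier.HIGH: ["conformity assessment", "technical documentation", "human oversight"],
    RiskTier.LIMITED: ["transparency notice"],
    RiskTier.MINIMAL: [],
}


def obligations_for(use_case):
    """Return (tier, obligations) for a known use case, else raise ValueError."""
    tier = USE_CASE_TIERS.get(use_case)
    if tier is None:
        # Unknown systems are not silently treated as minimal risk.
        raise ValueError(f"unclassified use case: {use_case!r}")
    return tier, OBLIGATIONS[tier]


if __name__ == "__main__":
    for name in USE_CASE_TIERS:
        tier, duties = obligations_for(name)
        print(f"{name}: {tier.value} -> {duties or 'no specific obligations'}")
```

Raising an error for unknown use cases, rather than defaulting to the lowest tier, reflects the cautious assumption that a system should be reviewed before it is treated as low risk.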

The Impact on Businesses and Society

The new legal framework for ethical AI has profound implications for businesses and society.

- For Businesses: The new regulations will impose significant compliance costs on companies that develop and use AI. However, for forward-thinking businesses, a commitment to ethical AI can be a powerful strategic advantage. It can build consumer trust, attract top talent, and differentiate a company from competitors in a crowded market. It will also create new roles within companies, such as Chief AI Ethicists and AI Governance Officers.

- For Society: The new legal framework has the potential to create a more equitable and just society. By mandating fairness audits and explainability, it can help to ensure that AI systems are not used to perpetuate existing inequalities. By holding companies accountable for their AI systems, it can help to build public trust in a technology that has the power to transform our world for the better.

Conclusion

The ethical development of AI is one of the defining challenges of our time. The rapid pace of technological innovation has created a new set of risks that threaten to undermine the very principles of a just and fair society. The legal frameworks that were designed for a different era are simply not equipped to handle the complexities of a technology that is capable of learning, evolving, and making autonomous decisions.

The legal battles that are unfolding today, from the push for new laws in Europe to the ongoing lawsuits in the U.S., are a necessary and vital response to this crisis. They are a recognition that AI is too powerful to be left unregulated. The most successful outcome would be a legal framework that strikes a careful balance: one that fosters innovation and allows AI to reach its full potential, while also ensuring that it is developed and deployed in a way that respects human rights, protects privacy, and ensures fairness.

The future of AI is not predetermined. It will be shaped not just by its code, but by the legal and ethical frameworks we choose to build for it. The laws we create now will determine whether AI becomes a source of new harms or a tool that serves all of humanity. This is a profound and urgent responsibility, and the ethical pioneers who lead this charge will be the architects of a more just, more equitable, and more sustainable digital future.
