In the span of a single generation, social media platforms have evolved from niche online communities into the most powerful gatekeepers of public discourse. They have become the digital town squares where billions of people gather to share ideas, debate politics, and access information. But unlike a physical town square, these platforms are owned and operated by private corporations that can, at any moment, unilaterally suspend a user or ban them from the service entirely. This act of “deplatforming” (the removal of a user from a social media or online service) has become a central legal and ethical battleground of the 21st century. It pits a corporation’s right to moderate its own platform against a user’s claim to free speech and has ignited a global debate over who controls the modern digital public square.

The conflict is a direct result of the immense power these platforms wield and the devastating real-world consequences of the content that flows through their networks. From the spread of disinformation that threatens democratic processes to the amplification of hate speech that fuels real-world violence, the harms are now undeniable. In response, a legal reckoning is underway, with lawsuits and legislation challenging the very legal foundations that have shielded these companies from accountability for decades. This article will provide a comprehensive guide to the legal framework that enables deplatforming, the key harms that are driving the push for accountability, the legal challenges being mounted against platforms, and the future of free speech and content moderation in the digital age.

The Digital Public Square and Its Private Owners

The legal and ethical debate over deplatforming is rooted in a fundamental paradox: while social media platforms feel like public spaces, they are legally treated as private companies with a right to set their own rules. The foundation of this legal immunity is Section 230 of the Communications Decency Act (CDA), a landmark U.S. law passed in 1996, long before the rise of the modern social media giants.

- The Core Provision: Section 230 states that “No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.” This means that a platform generally cannot be held liable for the content its users post, even if that content is defamatory, misleading, or harmful. The law was intended to protect a burgeoning internet and to encourage platforms to moderate content without fear of being sued either for removing something or for leaving it up.

- The Consequences: In practice, Section 230 has served as a powerful legal shield, enabling platforms to grow into global behemoths while largely avoiding legal responsibility for the content they host. It has allowed them to adopt a “laissez-faire” approach to content moderation, a business model that is based on maximizing user engagement, even if that engagement is driven by sensational or harmful content.

Major Harms and the Push for Deplatforming

The debate over deplatforming is a direct response to a growing list of societal harms that are widely perceived as having been amplified by platform business models. In the absence of a legal framework for holding platforms accountable, deplatforming has become a primary tool for platforms to respond to public pressure and to mitigate the most dangerous forms of content.

A. Disinformation and Misinformation:

Social media has become a primary vector for the rapid spread of disinformation, from politically motivated fake news and conspiracy theories to dangerous health misinformation. Platform algorithms, designed to maximize user engagement, can inadvertently amplify emotionally charged and false content, creating and reinforcing “echo chambers” that make it difficult for users to tell fact from fiction. This has had real-world consequences for elections, public health, and social cohesion. Deplatforming, in this context, is seen as a way for platforms to stop the spread of dangerous falsehoods.

B. Hate Speech and Extremism:

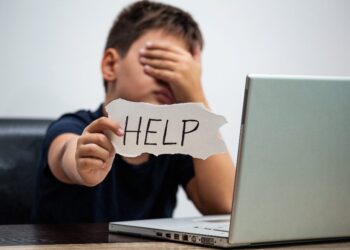

The amplification of hate speech and extremist content is a major concern. Algorithms can serve as a radicalization engine, funneling users down rabbit holes of increasingly extreme content. While platforms have spent billions on content moderation, a large volume of harmful material still slips through the cracks, often with devastating consequences, including real-world violence and targeted harassment. Deplatforming is often used to remove the most egregious offenders, such as white nationalists or other extremist groups, in an effort to reduce the spread of hatred and violence.

C. Incitement to Violence and Real-World Harm:

This is the most critical and least ambiguous reason for deplatforming. When a user uses a platform to directly incite violence or to organize a real-world attack, platforms have a moral and legal obligation to act. In a number of high-profile cases, platforms have deplatformed individuals and groups in response to their role in organizing or encouraging real-world violence. This is often the point at which platforms conclude that a user has crossed a red line and that they must act.

D. The “Right to Speak” and the “Right to Moderate”:

The debate over deplatforming is a fundamental conflict between two core principles.

- The Free Speech Argument: Deplatforming is viewed by critics as a form of censorship that violates a user’s right to free speech. They argue that platforms, as the new public square, have a moral obligation to remain neutral and to not discriminate against a user based on their political or social beliefs.

- The Private Property Argument: Platforms and their supporters argue that they are private companies with a right to set their own rules and to moderate their own content. They contend that platforms are not government entities and that a user does not have a “right” to a platform’s service. They also argue that platforms have a right to protect their brand and to remove any content that violates their terms of service.

- The Legal Dilemma: The legal dilemma is a profound one. Do we treat platforms as private companies with a right to control their own space, or do we treat them as public utilities with a duty to remain neutral? The answer to this question will determine the future of free speech in the digital age.

Legal Challenges to Deplatforming

While platforms are largely protected by legal immunity, a number of legal challenges are being mounted against them, with lawsuits and legislation aiming to hold them accountable.

- Breach of Contract and Terms of Service: A common legal argument against deplatforming is that a platform has violated its own terms of service. If a platform’s terms of service promise to provide a fair and transparent content moderation process, and a user is deplatformed without due process, they may have a legal claim for a breach of contract.

- Common Carrier and Public Utility Arguments: This is the most significant legal challenge to Section 230. Lawyers and politicians are arguing that social media platforms have grown so large and so central to public life that they should be treated like public utilities, such as a phone company or an electric company. Under this legal theory, a platform would be prohibited from discriminating against a user based on their viewpoint. While courts have largely rejected this argument, it is a key legal battleground.

- State-Level Laws and Executive Orders: In the U.S., a number of states have passed laws that aim to regulate social media platforms and to protect users from deplatforming. Florida and Texas have passed laws that restrict platforms from removing users or content based on political viewpoint. These laws have faced significant legal challenges. In Moody v. NetChoice (2024), the U.S. Supreme Court vacated the lower-court rulings on both laws and sent the cases back for further review, so the litigation continues.

- Defamation and Libel Lawsuits: While Section 230 protects platforms from liability for third-party content, it does not protect a user who posts defamatory content. Lawsuits are being filed against individuals who use platforms to spread false and defamatory information, and these lawsuits are forcing platforms to be more proactive in their content moderation.

Global Regulatory Approaches

The debate over deplatforming is a global one, and different countries and regions are taking a variety of legislative and judicial approaches.

- The European Union’s Digital Services Act (DSA): The EU is a global leader in social media regulation. The DSA is a landmark law that places a new legal obligation on platforms to mitigate risks, including the spread of disinformation and hate speech. It requires platforms to be more transparent about their content moderation policies and to provide a clear appeal mechanism for users whose content or accounts have been removed. This is a significant move that places a new level of legal accountability on platforms.

- Australia’s “Online Safety Act”: Australia has taken a unique approach to online safety. Its Online Safety Act requires platforms to act on reports of serious online abuse and to take down harmful content. It also gives the government the power to fine platforms that fail to comply. This is a powerful legal framework that is designed to protect users from the most dangerous forms of online harassment and bullying.

- The United Kingdom’s Online Safety Act: The U.K. is pioneering an approach based on a “duty of care.” The law, which was introduced as the Online Safety Bill and became the Online Safety Act 2023, holds platforms legally responsible for protecting users from a range of harms, including illegal content and content that is harmful to children. It could have a profound impact on the future of social media.

The Future of Deplatforming

The legal battles over deplatforming are a symptom of a deeper societal reckoning over the nature of our digital world. The future of free speech and content moderation will likely be defined by a new level of collaboration between governments, tech companies, and legal experts.

- New Legal Frameworks: The old legal frameworks are under strain, and new ones are being forged in real time. The future may see a new legal classification for social media platforms that sits somewhere between a private company and a public utility.

- Decentralized Platforms: The legal challenges to centralized platforms are fueling the rise of decentralized social media, where a user’s content and data are spread across independently operated servers or, in some designs, stored on a blockchain, and the platform’s rules are governed by a community of users. This could provide a new model for a free and open internet that is not controlled by a single company.

- Algorithmic Transparency: The debate over deplatforming is also a debate over algorithmic transparency. The future may see new laws that require platforms to be more transparent about how their algorithms work and how they are used to promote or suppress content.

Conclusion

The era of unfettered growth and minimal legal responsibility for social media platforms is coming to an end. The societal harms, from the erosion of democratic norms to the rise of mental health crises among young people, have become too great to ignore. The legal battle over deplatforming is a necessary reckoning for a powerful industry that has reshaped our world in ways we are only just beginning to understand. The debate is not just about laws and regulations; it is a fundamental discussion about the kind of society we want to live in—one where the freedom to connect is balanced with the collective responsibility to ensure a safe and healthy online environment.

The path forward is complex, fraught with legal and philosophical challenges, and will require a deep re-evaluation of the principles that have guided the internet for decades. It will demand that governments, companies, and citizens work together to forge a new social contract for the digital age, one that holds platforms accountable for the content that flows through their services, without stifling the free exchange of ideas that makes the internet so powerful. The innovators who will lead in this new landscape will be those who can design platforms that are not just engaging, but also ethical, transparent, and built on a foundation of trust and safety. The future of our digital public square depends on our ability to navigate this new legal frontier with foresight and determination.
