The advent of artificial intelligence (AI) has ushered in an era of unprecedented technological advancement, transforming industries and reshaping society at a pace that few could have anticipated. From powering autonomous vehicles and diagnosing diseases to revolutionizing creative work and automating complex financial decisions, AI holds the promise of a more efficient and innovative future. However, as AI systems become more powerful and deeply integrated into our daily lives, a parallel reality is taking shape: a complex and urgent legal frontier. The initial “Wild West” days of unfettered AI development are over. Governments, policymakers, and legal experts worldwide are now grappling with the profound ethical, economic, and societal challenges posed by this technology. The central question is no longer if AI should be regulated, but how.

The challenge lies in a delicate balancing act. Overly burdensome regulation could stifle innovation and cede technological leadership to nations with more permissive policies. Conversely, a lack of comprehensive regulation could expose society to significant risks, including algorithmic bias, privacy violations, security threats, and a dangerous lack of accountability. This article will provide a deep dive into the emerging legal frameworks around the globe, explore the key legal battlefronts, and analyze the strategic implications for businesses navigating this new, highly regulated landscape.

Why AI Needs Regulation

The imperative for AI regulation is rooted in the unique characteristics and inherent risks of the technology itself. Unlike traditional software, AI systems learn their behavior from data rather than following explicitly written rules, which makes that behavior harder to predict, test, and control.

- Algorithmic Bias and Discrimination: AI systems are only as good as the data they are trained on. If that data reflects existing societal biases (in hiring practices, lending history, or judicial outcomes, for example), the AI can learn and even amplify those biases, leading to discriminatory outcomes. This presents a major legal challenge, because it can perpetuate systemic inequalities in new, opaque ways.

- The “Black Box” Problem: Many of the most powerful AI models operate as “black boxes,” where the exact reasoning behind their decisions is inscrutable, even to their creators. This lack of transparency makes it very difficult to explain or challenge an AI’s decision-making process, posing a serious problem for accountability and due process in areas like criminal justice or loan approvals.

- Liability and Accountability: When an autonomous vehicle causes an accident, who is at fault? The AI developer? The car manufacturer? The owner? Existing legal frameworks for negligence and product liability were not designed for an era of machine autonomy. Establishing clear lines of responsibility is a critical, and unresolved, legal challenge.

- Intellectual Property and Copyright: AI models are trained on vast datasets, including copyrighted text, images, and audio. The content they generate can sometimes resemble or even replicate existing works. This raises a host of legal questions: Is AI-generated art copyrightable? Does a model’s training process constitute copyright infringement? This is a new legal battlefront with significant implications for the creative industries.

- Privacy and Data Security: AI systems consume massive amounts of data, much of it personal and sensitive. A lack of robust data governance could lead to large-scale privacy breaches and misuse of personal information, requiring new legal protections to safeguard user data.

Global Approaches to AI Regulation

The absence of a global consensus on AI governance has led to a fragmented but highly revealing landscape, with major powers taking fundamentally different approaches.

A. The European Union’s Risk-Based Model (The AI Act):

The EU is at the forefront of AI regulation. Its AI Act, formally adopted in 2024, is the world’s first comprehensive legal framework for AI. The EU’s approach is a risk-based model that categorizes AI systems into four tiers.

- Unacceptable Risk: AI systems that pose a clear threat to fundamental human rights are banned. This includes systems that manipulate human behavior, social scoring by governments, and real-time biometric identification in public spaces for law enforcement purposes (with limited exceptions).

- High Risk: This category includes AI used in critical sectors like healthcare, law enforcement, employment, and education. These systems are not banned, but they face strict requirements. Before being placed on the market, they must undergo a rigorous conformity assessment that includes a review of their risk management systems, data governance, technical documentation, transparency, and human oversight.

- Limited Risk: AI systems with limited risk, such as chatbots or deepfake generators, are subject to lighter obligations focused on transparency. For example, users must be made aware that they are interacting with an AI or that the content is synthetically generated.

- Minimal or No Risk: The vast majority of AI systems, such as spam filters or video games, fall into this category. They are subject to no specific legal obligations under the AI Act, encouraging innovation in these low-risk areas. The EU’s goal is to establish a global standard for “trustworthy AI.”
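For teams that keep an inventory of their AI systems, the four tiers above can be sketched as a simple lookup. This is only an illustration: the names `RiskTier`, `EXAMPLE_TIERS`, and `obligations_for` are hypothetical, the use cases are the examples mentioned in this article, and real classification under the AI Act depends on the legal text and on legal review, not on a table like this.

```python
from enum import Enum


class RiskTier(Enum):
    """The four tiers described above, with a one-line summary of each."""
    UNACCEPTABLE = "banned"
    HIGH = "conformity assessment before market placement"
    LIMITED = "transparency obligations"
    MINIMAL = "no specific obligations"


# Hypothetical inventory: use cases taken from the examples in this article.
EXAMPLE_TIERS = {
    "government social scoring": RiskTier.UNACCEPTABLE,
    "hiring screening tool": RiskTier.HIGH,
    "customer service chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def obligations_for(use_case: str) -> str:
    """Return the tier and summary for a known use case, or flag it for review."""
    tier = EXAMPLE_TIERS.get(use_case)
    if tier is None:
        # Anything not in the inventory is deliberately left undecided.
        return "unclassified: needs legal review"
    return f"{tier.name}: {tier.value}"


if __name__ == "__main__":
    for case in ["spam filter", "hiring screening tool", "emotion recognition"]:
        print(case, "->", obligations_for(case))
```

The point of the sketch is the default branch: a system that has not been explicitly classified is treated as undecided rather than assumed to be low risk.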

B. The U.S. Approach: A Patchwork of Laws:

Unlike the EU’s top-down, centralized framework, the United States has adopted a more decentralized, sector-specific, and non-prescriptive approach.

- Executive Orders: The U.S. government has issued executive orders on AI, focusing on safety standards, ethical guidelines, and federal agency use of the technology. These are not laws but directives aimed at promoting responsible development.

- State-Level Legislation: States are taking the lead on a variety of AI-related issues. For example, some states have passed laws regulating the use of AI in hiring decisions, while others are addressing deepfake concerns in political campaigns.

- Agency Guidance: Federal agencies like the Federal Trade Commission (FTC) and the Equal Employment Opportunity Commission (EEOC) are using existing laws to address AI-related harms. The FTC, for example, has warned that it will bring enforcement actions against companies for unfair or deceptive practices related to biased algorithms. This creates a legal environment where companies must assume they are accountable under existing statutes.

C. China’s AI Governance Strategy:

China’s approach is characterized by a dual focus: aggressively promoting AI development to gain a competitive advantage while simultaneously implementing strict, top-down governance to maintain social control.

- Algorithmic Governance: China has implemented regulations that require algorithms to be transparent and non-discriminatory. These rules specifically target recommendation engines and require platforms to provide users with an option to opt out of personalized recommendations.

- Deepfake and Generative AI Rules: China has been one of the first countries to regulate generative AI, requiring providers to label synthetically generated content and ensuring that AI is not used to create content that violates social norms or national security. The government also requires real-name registration for users of these services.

Key Legal Battlefronts and Issues

As regulators slowly build a framework, the courts are already hearing landmark cases that will define the future of AI law. These legal battlefronts are shaping precedents and creating a new class of legal expertise.

- Liability and Accountability: The “black box” nature of AI poses a significant hurdle in liability cases. When an AI makes a wrong decision, who is legally responsible? Lawyers are exploring theories of negligence, product liability, and a new concept of “algorithmic negligence,” where the failure to properly train, test, or audit an AI system could be grounds for a lawsuit.

- Intellectual Property and Copyright: The debate over AI-generated content is in full swing. Artists, writers, and musicians are suing generative AI companies, arguing that the models were trained on their copyrighted works without permission. Courts are being asked to decide whether this training process constitutes fair use and whether the output of an AI can be copyrighted.

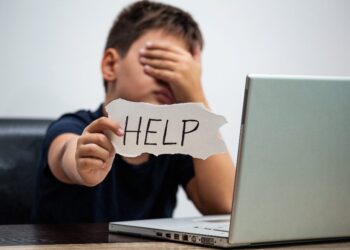

- Algorithmic Bias and Discrimination: Lawsuits are being filed against companies using AI in critical decisions, such as a mortgage lender’s AI that denies loans to a protected class or an HR tool that screens out job applicants based on criteria correlated with race or gender. Lawyers are using existing civil rights laws to challenge these new forms of discrimination, creating a demand for legal experts who understand both law and data science.

- Data Privacy and Security: The sheer volume of data required for AI training raises massive privacy concerns. Legal challenges are emerging around the unauthorized use of personal data for training purposes, especially data scraped from the open web. There is a growing need for legal frameworks that specifically address how AI developers can handle, secure, and obtain consent for the use of personal data.

Practical Implications for Businesses

The new legal frontier is not just a concern for lawyers and policymakers; it has direct and immediate implications for businesses that develop or use AI.

- Proactive Compliance and Governance: Companies must move from a reactive to a proactive compliance model. This means not waiting for a lawsuit to be filed, but instead establishing an internal governance framework for AI. This includes creating AI ethics boards, conducting regular “AI impact assessments” to identify potential risks, and building robust internal documentation to demonstrate compliance.

- The Rise of the Responsible AI Professional: There is a growing demand for a new class of professional—the “AI ethicist” or “responsible AI manager”—who can bridge the gap between technical development and legal compliance. This role involves auditing AI systems for bias, ensuring data is handled ethically, and advising leadership on the legal risks of their AI products.

- A Strategic Advantage: For forward-thinking businesses, demonstrating a commitment to responsible AI can be a powerful strategic advantage. It can build consumer trust, attract top talent, and differentiate a company from competitors in a crowded market. In a world where AI is becoming ubiquitous, trust in the technology will be a key driver of market share.
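To make the idea of auditing an AI system for bias more concrete, here is a minimal sketch of one check an audit might include: comparing selection rates across groups. It uses the "four-fifths" rule of thumb from U.S. employment selection guidelines, under which a group's selection rate below 80% of the highest group's rate is treated as a signal worth investigating. The function names, group labels, and numbers are hypothetical, and a flag from this check is a prompt for closer review, not a legal finding.

```python
def selection_rates(outcomes):
    """Map each group to selected / total. `outcomes` is {group: (selected, total)}."""
    rates = {}
    for group, (selected, total) in outcomes.items():
        if total <= 0:
            raise ValueError(f"group {group!r} has no applicants")
        rates[group] = selected / total
    return rates


def adverse_impact_ratios(outcomes):
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no one was selected in any group")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(outcomes, threshold=0.8):
    """Return the groups whose ratio falls below the threshold (default 0.8)."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Hypothetical screening results: (applicants advanced, applicants screened).
    results = {"group_a": (50, 100), "group_b": (30, 100)}
    print(adverse_impact_ratios(results))
    print(flag_groups(results))
```

In this made-up example, group_b advances at 30% against group_a's 50%, a ratio of 0.6, so it falls under the 0.8 threshold and is flagged. A real audit would also look at sample sizes, statistical significance, and which features drive the gap.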

Conclusion

The legal regulation of artificial intelligence is one of the defining legislative challenges of our time. It is a complex task that requires balancing the promise of innovation with the imperative to protect human rights, ensure fairness, and uphold justice. The current fragmented global landscape reflects the initial uncertainty, but as the EU’s AI Act demonstrates, a more structured and comprehensive approach is not only possible but necessary.

The legal journey ahead will be long and complex. We will see more landmark lawsuits that challenge existing legal precedents, more governments racing to pass new legislation, and a growing recognition that this is a global issue that requires international cooperation. The most successful businesses will not view regulation as a burden but as a roadmap for responsible innovation. They will understand that a robust legal framework is the essential foundation upon which a trustworthy, ethical, and ultimately beneficial AI ecosystem can be built. This is not about halting progress; it is about steering the course of a transformative technology to ensure it serves all of humanity. The future of AI will be shaped not just by its code, but by the laws we create to govern it.
